Edge AI: What It Is, How It Works & Why Every Smart Device Needs It

Edge AI is quietly powering the devices around you — from your smartphone camera to factory robots and self-driving cars. Here is your complete, plain-English guide to understanding Edge AI and why it is one of the most important tech shifts of 2026.

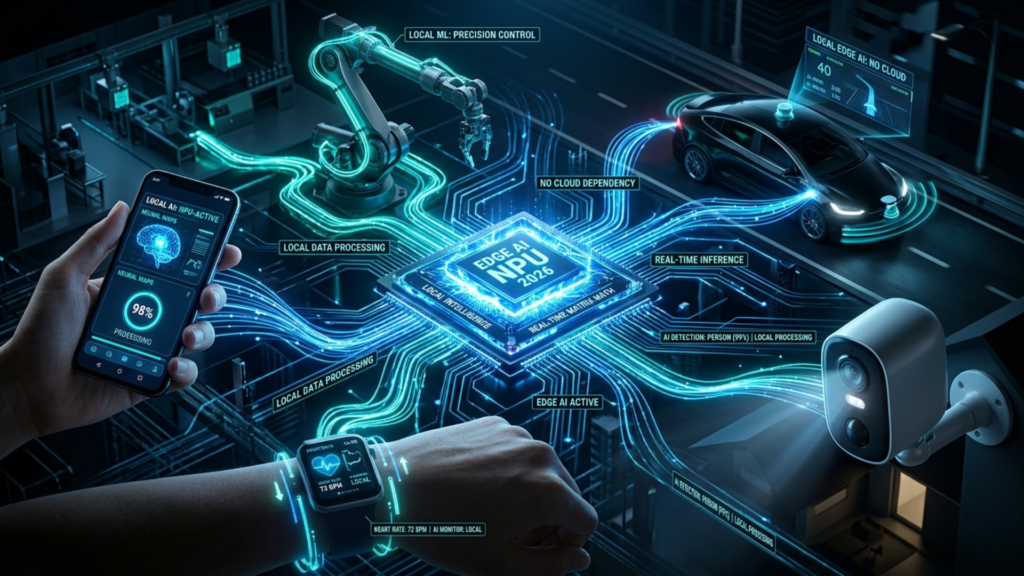

Edge AI is the technology that allows artificial intelligence to think, decide, and act directly on a device — without sending data to a distant cloud server first. Your smartphone recognizing your face to unlock the screen, a factory machine spotting a defect on an assembly line in real time, a smart security camera detecting an intruder before the footage even reaches the internet — all of these are Edge AI in action. And in 2026, Edge AI has moved from a niche engineering concept to one of the most strategically important technology trends shaping every connected industry on the planet.

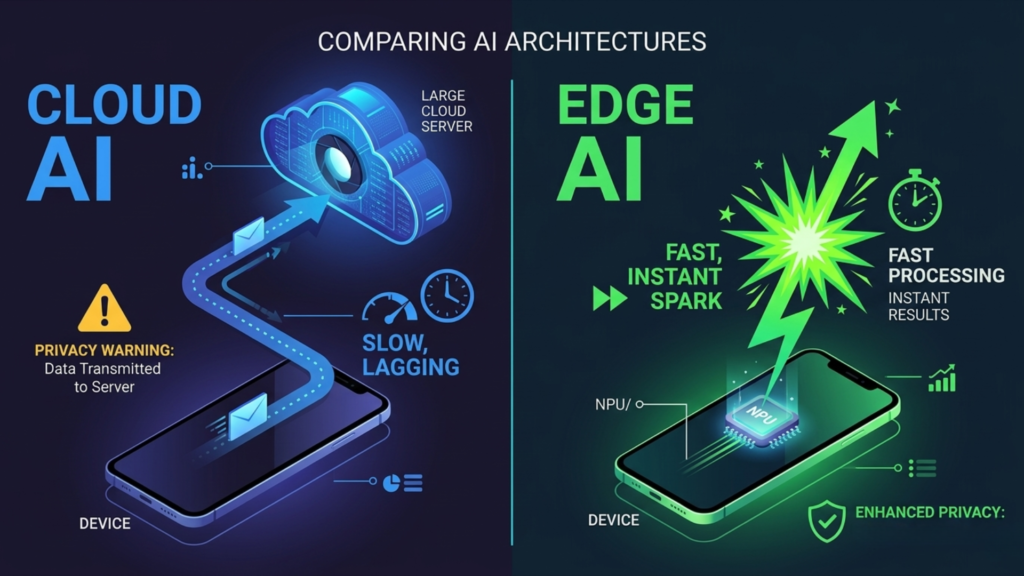

For years, AI lived almost entirely in the cloud. You asked a question, the request traveled to a massive data center, the model processed it, and the answer came back. That model works well for many applications — but it has real limitations. It requires a reliable internet connection. It introduces latency. It raises serious privacy concerns. And it places enormous demands on network bandwidth and cloud infrastructure.

Edge AI addresses each of these limitations by moving the intelligence from the cloud to the device itself. In this article, we explain exactly how it works, where it is already deployed, why it matters so much, and what businesses and professionals need to know to stay ahead.

What Is Edge AI? Understanding the Core Concept

To understand Edge AI, you need to understand the difference between the cloud and the edge.

The cloud refers to remote data centers — massive facilities housing thousands of servers that store data and run computations on behalf of connected devices. When you use a voice assistant, stream a video, or run a web-based AI tool, you are using the cloud. The intelligence lives far away from you, and your device is just a window into it.

The edge refers to the devices and systems closest to where data is actually generated — your smartphone, a factory sensor, a hospital monitoring system, a traffic camera, an autonomous vehicle. These devices sit at the edge of the network, right at the point where real-world data enters the digital world.

Edge AI deploys AI models directly on these edge devices, so that intelligence operates locally: data is processed on the device itself rather than shipped to a cloud server for analysis.

Edge AI means the intelligence moves to where the data is born — not the other way around. The result is faster decisions, stronger privacy, and AI that works even when the internet does not.

This shift is made possible by a new generation of specialized chips — called AI accelerators or neural processing units (NPUs) — that are compact, energy-efficient, and powerful enough to run sophisticated AI models on devices as small as a smartphone or an industrial sensor. Apple’s Neural Engine, Qualcomm’s AI Engine, and Google’s Tensor chip are all examples of this hardware revolution already in your pocket.

Edge AI vs Cloud AI: What Is the Real Difference?

Both Edge AI and Cloud AI have their place. Understanding the distinction explains why Edge AI is growing so rapidly, and why it is a complementary and increasingly essential layer alongside cloud-based AI rather than a replacement for it.

Speed and Latency

Cloud AI requires data to travel from the device to a remote server and back. Even on fast connections, this round trip introduces latency: delays measured in milliseconds that are acceptable for many applications but catastrophic for others. A self-driving car that needs to decide whether to brake in the next 50 milliseconds cannot afford to wait for a cloud response. An industrial robot detecting a dangerous fault in real time cannot pause for a network round trip. Edge AI removes the network round trip by processing data locally, so the only delay left is the time the device itself needs to run the model.
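To make the latency argument concrete, here is a minimal sketch of a latency budget check. The 50 millisecond deadline comes from the braking example above; every stage name and per-stage number is an illustrative assumption, not a measurement.

```python
def fits_deadline(stage_latencies_ms, deadline_ms):
    """Return (total_ms, ok) for a pipeline whose stages run one after another."""
    total = sum(stage_latencies_ms.values())
    return total, total <= deadline_ms

# All numbers below are illustrative assumptions, not benchmarks.
DEADLINE_MS = 50  # the braking deadline used in the text

cloud_path = {"capture": 5, "uplink": 40, "server_inference": 10, "downlink": 40}
edge_path = {"capture": 5, "on_device_inference": 20}

for name, path in (("cloud", cloud_path), ("edge", edge_path)):
    total, ok = fits_deadline(path, DEADLINE_MS)
    print(f"{name}: {total} ms, within deadline: {ok}")
```

Note that the edge path still spends time on inference. What disappears is the uplink and downlink, which are also the least predictable parts of the budget.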

Privacy and Data Security

Every time data leaves a device and travels to the cloud, it passes through networks and infrastructure that create privacy and security exposure. For sensitive applications such as medical diagnostics, personal biometrics, financial monitoring, and private communications, sending raw data to a cloud server is a significant liability. Edge AI keeps the raw data on the device, where it is processed locally; only results, if anything, need to leave it. This fundamentally changes the privacy calculus for a wide range of applications.

Connectivity Independence

Cloud AI is only as reliable as the internet connection it depends on. In remote locations, on aircraft, in underground facilities, in disaster response scenarios, or simply in areas with unreliable connectivity, cloud AI fails. Edge AI continues to operate regardless of network availability. For mission-critical applications in challenging environments, this independence is not a convenience — it is a requirement.

Bandwidth and Cost Efficiency

Modern IoT deployments can involve thousands or even millions of sensors generating continuous streams of data. Sending all of that raw data to the cloud for processing is extraordinarily expensive in both bandwidth costs and cloud computing fees. Edge AI allows devices to process data locally, sending only meaningful results or exceptions to the cloud — dramatically reducing data transmission volumes and associated costs.
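The idea of sending only results or exceptions can be sketched in a few lines. This is a simplified illustration: the reading format, the threshold of 80.0, and the `summarize_window` helper are all hypothetical, and a real deployment would choose its own summary statistics.

```python
import json

def summarize_window(readings, limit):
    """Reduce a window of raw readings to a small summary plus any exceptions."""
    exceptions = [r for r in readings if r["value"] > limit]
    values = [r["value"] for r in readings]
    return {
        "count": len(values),
        "min": min(values),
        "max": max(values),
        "mean": sum(values) / len(values),
        "exceptions": exceptions,
    }

# Hypothetical temperature readings; the limit of 80.0 is an assumed threshold.
window = [{"t": i, "value": 70.0 + (i % 5)} for i in range(100)]
window[42]["value"] = 91.5  # one out-of-range reading

raw_bytes = len(json.dumps(window).encode("utf-8"))
summary = summarize_window(window, limit=80.0)
summary_bytes = len(json.dumps(summary).encode("utf-8"))

print(f"raw payload: {raw_bytes} bytes, summary payload: {summary_bytes} bytes")
```

The device uploads the summary instead of the full window, and the single out-of-range reading still reaches the cloud in full detail.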

Edge AI Use Cases: Where It Is Already Making an Impact

Edge AI is not a future technology — it is deployed at scale right now across dozens of industries. Here are the most significant real-world applications:

Smartphones and Consumer Devices

The most widespread Edge AI deployment in the world is already in your pocket. Modern smartphones use on-device AI for facial recognition, computational photography, real-time language translation, voice recognition, health monitoring, and predictive text. Features such as Apple’s Face ID, Google’s computational camera processing, and Samsung’s live translation are built to run on the device itself rather than in the cloud. The experience is fast, private, and available offline.

Manufacturing and Industrial IoT

Edge AI is transforming factory floors. Vision systems powered by on-device AI inspect products for defects at production speeds that no human inspector could match — and with a level of consistency that eliminates the variability inherent in human attention. Predictive maintenance systems analyze vibration, temperature, and acoustic data from machinery in real time, identifying failure patterns before a breakdown occurs and scheduling maintenance proactively. The result is dramatically reduced downtime and extended equipment life.
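As a rough illustration of the predictive maintenance idea, the sketch below flags sensor readings that deviate sharply from a rolling baseline. It is a deliberately simple statistical stand-in rather than a trained model; the class name, window size, threshold, and synthetic vibration values are all assumptions.

```python
from collections import deque
from statistics import mean, stdev

class RollingAnomalyDetector:
    """Flag readings that deviate strongly from the recent window."""

    def __init__(self, window_size=50, z_threshold=4.0):
        self.window = deque(maxlen=window_size)
        self.z_threshold = z_threshold

    def update(self, value):
        """Return True if `value` is anomalous relative to the window so far."""
        is_anomaly = False
        if len(self.window) >= 10:  # wait for a minimal baseline
            mu = mean(self.window)
            sigma = stdev(self.window)
            if sigma > 0 and abs(value - mu) / sigma > self.z_threshold:
                is_anomaly = True
        if not is_anomaly:
            # Keep anomalies out of the baseline so they do not mask later ones.
            self.window.append(value)
        return is_anomaly

# Synthetic vibration amplitudes (assumed units and values) with one spike.
readings = [1.0 + 0.01 * (i % 7) for i in range(60)]
readings[45] = 2.5
detector = RollingAnomalyDetector()
alerts = [i for i, r in enumerate(readings) if detector.update(r)]
print(alerts)
```

Because the detector holds only a short window in memory, logic of this kind can run on a small microcontroller next to the machine and raise an alert without any network connection.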

Healthcare and Medical Devices

In healthcare, Edge AI is enabling a new generation of intelligent medical devices. Wearable monitors use on-device AI to continuously analyze heart rhythm, blood oxygen, glucose levels, and other vitals — alerting wearers and clinicians to anomalies in real time without transmitting a continuous stream of sensitive health data to the cloud. Portable diagnostic devices in remote or low-resource settings can perform AI-powered analysis without any internet connection, bringing sophisticated diagnostic capability to locations that could never support cloud-dependent solutions.

Autonomous Vehicles and Transportation

Self-driving vehicles represent perhaps the most demanding Edge AI application in existence. A modern autonomous vehicle processes data from dozens of sensors — cameras, LIDAR, radar, GPS — and must make safety-critical decisions in milliseconds. There is no architecture in which cloud dependency is acceptable here. Every perception, object recognition, and navigation decision runs on powerful on-board computing systems. Edge AI is not just preferred for autonomous vehicles — it is the only viable option.

Retail and Smart Stores

Retailers are deploying Edge AI to transform the in-store experience. Computer vision systems analyze shopper movement patterns and dwell times to optimize store layouts and product placement. Checkout-free store concepts use arrays of cameras and weight sensors powered by Edge AI to track what customers pick up and automatically charge them when they leave — no cashier, no queue, no friction. All of this processing happens locally, in real time, without routing footage through the cloud.

Smart Cities and Public Infrastructure

Traffic management systems use Edge AI to analyze camera feeds at intersections in real time, adjusting signal timing dynamically to optimize traffic flow without transmitting video to a central server. Smart street lighting adjusts brightness based on pedestrian and vehicle presence detected by local sensors. Public safety systems can detect unusual crowd behaviors or incidents and alert authorities immediately, with sensitive video data staying local rather than flowing into centralized surveillance infrastructure.

The Edge AI Hardware Revolution Driving It All

The explosion of Edge AI deployments is not happening by accident. It is being driven by a fundamental shift in hardware capabilities: a new generation of chips designed specifically for on-device AI inference makes it possible to run models that once needed server-class hardware on devices small enough to wear on your wrist.

Neural Processing Units (NPUs)

NPUs are specialized processors designed specifically to accelerate the matrix multiplication operations that underpin neural network inference. Unlike general-purpose CPUs or even GPUs, NPUs are optimized for a narrow set of operations — which means they can perform AI inference tasks with dramatically higher efficiency in terms of both speed and power consumption. Every major smartphone chip now includes an NPU, and they are increasingly appearing in laptops, IoT devices, and industrial hardware.

Model Optimization Techniques

Running AI models on constrained edge hardware requires more than just better chips — it requires making the models themselves smaller and more efficient without sacrificing too much performance. Techniques like quantization (reducing the numerical precision of model weights), pruning (removing unnecessary connections from neural networks), and knowledge distillation (training small models to replicate the behavior of large ones) have matured significantly, making it possible to deploy capable AI on devices with very limited compute and memory.
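Two of these techniques are simple enough to sketch in plain Python. The example below shows affine 8-bit quantization and magnitude-based pruning on a short list of made-up weights. The function names are hypothetical, and production toolchains apply the same ideas per tensor or per channel with calibration data, so treat this as an illustration of the arithmetic only.

```python
def quantize_int8(weights):
    """Affine (asymmetric) quantization of floats to integers in [-128, 127]."""
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / 255 or 1.0  # avoid a zero scale for constant input
    zero_point = round(-128 - lo / scale)
    q = [max(-128, min(127, round(w / scale) + zero_point)) for w in weights]
    return q, scale, zero_point

def dequantize(q, scale, zero_point):
    """Map int8 codes back to approximate float values."""
    return [(v - zero_point) * scale for v in q]

def prune_by_magnitude(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)
    drop = set(sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]

weights = [-0.82, -0.05, 0.0, 0.03, 0.41, 0.77, 1.20]  # made-up example values
q, scale, zp = quantize_int8(weights)
restored = dequantize(q, scale, zp)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
pruned = prune_by_magnitude(weights, sparsity=0.4)

print("int8 codes:", q)
print("largest round-trip error:", max_error, "quantization step:", scale)
print("pruned weights:", pruned)
```

Storing each weight as one byte instead of a four-byte float cuts weight storage to a quarter, at the cost of a rounding error bounded by roughly one quantization step. Pruning trades accuracy for sparsity in a similar way, which is why both are usually followed by an accuracy check on held-out data.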

Edge AI Challenges Businesses Need to Plan For

Edge AI is powerful — but deploying it at scale comes with real challenges that organizations must plan for carefully:

- Model management complexity: Deploying AI models across thousands or millions of edge devices creates significant model management challenges. How do you update models when they need to be retrained? How do you monitor performance across a distributed fleet of devices? How do you ensure consistency when devices have different hardware specifications? These are engineering and operations problems that require dedicated solutions.

- Hardware fragmentation: The edge device ecosystem is extraordinarily diverse — different chips, operating systems, memory constraints, and power budgets. Developing AI applications that run well across this fragmented landscape requires careful architecture decisions and rigorous testing across device categories.

- Security at the edge: While Edge AI improves data privacy by keeping sensitive data local, it also creates new security challenges. Edge devices are physically accessible in ways that cloud servers are not — they can be stolen, tampered with, or reverse-engineered. Protecting AI models and the data they process at the edge requires robust device security measures, including secure enclaves, encrypted storage, and tamper detection.

- Limited compute and power budgets: Despite remarkable hardware advances, edge devices still have significantly less compute power and energy budget than cloud servers. Model design and optimization must account for these constraints from the beginning — not as an afterthought.

- Data quality at the edge: Edge AI models are only as good as the data they are trained on — and deploying them in real-world edge environments often exposes gaps between training data and real conditions. Continuous monitoring for model drift and performance degradation is essential.
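One common way to watch for the drift mentioned in the last item is to compare the distribution of a live input feature against a reference sample from training. The sketch below uses the population stability index (PSI) for that comparison. The bin edges and synthetic samples are assumptions, and the often-quoted cutoff of about 0.25 for a significant shift is a rule of thumb, not a standard.

```python
import math

def histogram(values, edges):
    """Fraction of values per bin; values beyond the edges land in the end bins."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[sum(v > e for e in edges)] += 1
    eps = 1e-6  # smoothing so empty bins do not produce log(0)
    return [max(c / len(values), eps) for c in counts]

def population_stability_index(reference, live, edges):
    """PSI between a reference sample and a live sample of one feature."""
    ref = histogram(reference, edges)
    cur = histogram(live, edges)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))

edges = [0.2, 0.4, 0.6, 0.8]
reference = [i / 100 for i in range(100)]        # roughly uniform on [0, 1)
same = [(i + 0.5) / 100 for i in range(100)]     # same distribution, new sample
shifted = [0.5 + i / 200 for i in range(100)]    # mass moved to the upper half

psi_same = population_stability_index(reference, same, edges)
psi_shifted = population_stability_index(reference, shifted, edges)
print(f"stable input: {psi_same:.4f}, shifted input: {psi_shifted:.4f}")
```

A device can compute a score like this locally and report only the number, which keeps drift monitoring compatible with the privacy and bandwidth goals described earlier.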

How to Get Started with Edge AI in Your Organization

Whether you are a business leader evaluating Edge AI for your operations or a developer looking to build edge-intelligent products, here is a practical path forward:

- Identify Latency-Sensitive or Privacy-Critical Use Cases

Start by identifying the applications in your business where cloud AI’s latency or privacy limitations create real problems. These are your best starting points for Edge AI deployment. Real-time quality inspection, on-device biometrics, offline-capable mobile applications, and sensitive data analysis are all strong candidates.

- Evaluate Your Hardware Landscape

Understand the computing capabilities of the devices at your edge. What chips are they running? How much memory do they have? What is their power budget? This determines what AI models are viable for your environment and guides your model selection and optimization strategy.
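A quick back-of-the-envelope check helps here: multiply the parameter count by the bytes per weight and compare the result with the memory you can spare. The sketch below does exactly that. The model size and the 64 MB budget are hypothetical, and the estimate covers weights only, not activations or runtime overhead.

```python
BYTES_PER_WEIGHT = {"float32": 4, "float16": 2, "int8": 1}

def weight_footprint_mb(num_parameters, dtype):
    """Approximate storage for model weights alone, in megabytes (10**6 bytes)."""
    return num_parameters * BYTES_PER_WEIGHT[dtype] / 1e6

def viable_dtypes(num_parameters, memory_budget_mb):
    """List the precisions whose weights fit within the given budget."""
    return [d for d in BYTES_PER_WEIGHT
            if weight_footprint_mb(num_parameters, d) <= memory_budget_mb]

# Hypothetical model and device: 25 million parameters, 64 MB free for weights.
for dtype in BYTES_PER_WEIGHT:
    print(dtype, weight_footprint_mb(25_000_000, dtype), "MB")
print("fits in 64 MB:", viable_dtypes(25_000_000, memory_budget_mb=64))
```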

- Choose the Right Model Optimization Approach

Work with your AI team or partners to select appropriate model compression techniques for your use case. Frameworks like TensorFlow Lite (since renamed LiteRT), PyTorch Mobile (now succeeded by ExecuTorch), and ONNX Runtime support deploying optimized models on edge devices and are reasonable starting points for most Edge AI projects.

- Build a Model Lifecycle Management Plan

Plan from day one how you will update, monitor, and retire AI models on your edge devices. Over-the-air update mechanisms, remote performance monitoring, and automated anomaly detection for model drift should be part of your architecture from the beginning.

- Start Small, Prove Value, Then Scale

Run a focused pilot in a controlled environment before committing to large-scale Edge AI deployment. Prove the value proposition — whether that is latency reduction, cost savings, privacy improvement, or reliability gains — with real data before scaling.
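Even a pilot benefits from a basic update check, since the lifecycle step above calls for over-the-air updates from the start. The sketch below shows one minimal form of it: a device accepts a new model only if the version is newer and the downloaded bytes match the digest in the update manifest. The manifest layout and function names are hypothetical. A digest check only catches corruption, so a real system would also verify a cryptographic signature on the manifest.

```python
import hashlib

def parse_version(text):
    """Turn '1.4.0' into (1, 4, 0) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))

def should_install(installed_version, manifest, payload):
    """Accept an update only if it is newer and its SHA-256 digest matches."""
    if parse_version(manifest["version"]) <= parse_version(installed_version):
        return False
    digest = hashlib.sha256(payload).hexdigest()
    return digest == manifest["sha256"]

payload = b"placeholder model bytes"  # stands in for a real model file
manifest = {"version": "1.10.0", "sha256": hashlib.sha256(payload).hexdigest()}

print(should_install("1.9.3", manifest, payload))           # intact, newer
print(should_install("1.9.3", manifest, payload + b"x"))    # corrupted download
print(should_install("1.10.0", manifest, payload))          # not newer
```

Comparing versions as integer tuples matters: as plain strings, "1.10.0" would sort before "1.9.3" and the update would be skipped.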

Final Thoughts: Edge AI Is the Intelligence Layer of the Physical World

Edge AI represents something genuinely profound: the moment when artificial intelligence stops living only in data centers and starts living in the physical world around us — in our devices, our vehicles, our factories, our hospitals, and our cities.

The implications of this shift are enormous. It means AI that is faster, more private, more reliable, and more accessible in the environments where it matters most. It means intelligent devices that do not need the internet to be smart. It means a world where the physical and digital layers of intelligence are woven together at the point of experience rather than separated by a network round trip.

We are still early in the Edge AI story. The hardware is maturing rapidly, the software ecosystem is catching up, and the business cases are multiplying every quarter. The organizations that understand Edge AI, invest in the right capabilities, and deploy it thoughtfully today will be the ones building the intelligent products and services that define their industries tomorrow.