AI-Powered Cybersecurity: How Artificial Intelligence Is Winning the War Against Cyber Threats

Cyberattacks are faster, smarter, and more destructive than ever. The only way to fight AI-powered threats is with AI-powered cybersecurity, and in 2026 the organizations that understand this are the ones that avoid making headlines for the wrong reasons.

AI-powered cybersecurity is no longer a futuristic concept: it is the frontline reality of how modern organizations defend themselves against an ever-escalating wave of digital threats. Widely cited figures tell a stark story: industry estimates project that cybercrime will cost the global economy more than 10 trillion dollars a year, one often-quoted statistic puts the rate of cyberattacks at one every 39 seconds, and the average time to detect a breach, particularly without AI assistance, can stretch beyond 200 days. Human security teams, no matter how skilled, simply cannot process the volume, velocity, and complexity of modern threat data fast enough to keep pace.

At the same time, the attackers themselves have embraced artificial intelligence. AI is being used to automate the discovery of vulnerabilities, craft hyper-personalized phishing emails that fool even security-aware employees, generate polymorphic malware that changes its signature to evade detection, and coordinate large-scale attacks with a speed and precision that no human operation could match.

The result is a new kind of conflict — AI versus AI — playing out across enterprise networks, critical infrastructure, and government systems around the world every single day. In this article, we break down exactly how AI-powered cybersecurity works, what it can do that traditional security tools cannot, where it is being deployed right now, and what every organization needs to understand to stay protected in this new era of intelligent cyber warfare.

Why AI-Powered Cybersecurity Is No Longer Optional

To understand why AI has become indispensable in cybersecurity, you need to understand the fundamental mismatch between the scale of modern threats and the capacity of traditional defenses.

A large enterprise generates billions of security events every day: log entries, network connections, authentication attempts, file system changes, API calls, and endpoint activities, all forming a continuous torrent of data that must be analyzed for signs of malicious activity. A typical Security Operations Center staffed by skilled human analysts can meaningfully investigate perhaps a few thousand alerts per day. The gap between what needs to be analyzed and what humans can analyze is not a staffing problem; it is a difference of several orders of magnitude that no realistic amount of hiring can close.

Traditional rule-based security tools try to bridge this gap by applying predefined signatures and rules to filter out noise and flag known threats. These tools are valuable but fundamentally limited: they can only detect threats that match patterns someone has already anticipated and encoded into a rule. Against novel attacks, zero-day exploits, and sophisticated adversaries who deliberately design their techniques to evade known signatures, rule-based tools are largely blind.

Traditional security tools are looking for criminals who match a wanted poster. AI-powered cybersecurity is looking for anyone who is behaving strangely — whether or not there is a poster for them yet.

AI-powered cybersecurity solves both problems simultaneously. It can process billions of events in real time, far beyond any human capacity. And instead of matching known patterns, it learns what normal looks like across your entire environment — and detects deviations from that normal, even when the attack technique is completely novel.

How AI-Powered Cybersecurity Works: The Core Technologies

AI-powered cybersecurity is not a single technology — it is a collection of complementary AI and machine learning techniques applied to different aspects of the security problem. Here are the most important:

Behavioral Analytics and Anomaly Detection

One of the most powerful and widely deployed AI cybersecurity capabilities is behavioral analytics: using machine learning to build detailed models of normal behavior for every user, device, application, and network segment in an environment, and then continuously monitoring for deviations from those baselines. When a user who normally works from London during business hours suddenly authenticates from Southeast Asia at 3 a.m. and begins downloading large volumes of sensitive files, a behavioral analytics system flags this immediately, even if the credentials used are completely legitimate and no malware signature is present. This is how AI catches the attacks that traditional tools miss entirely.
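To make the baseline idea concrete, here is a minimal sketch in Python. It is a deliberately simple statistical stand-in for the machine learning models described above: it assumes a hypothetical per-user profile (`UserBaseline`) of usual countries, usual login hours, and daily download volumes, and flags activity that comes from a new country, at an unseen hour, or with a download volume more than three standard deviations (an assumed threshold) above the user's mean. Commercial products learn far richer baselines across many more signals.

```python
from dataclasses import dataclass, field
from statistics import mean, pstdev


@dataclass
class UserBaseline:
    """Per-user profile learned from historical activity (hypothetical schema)."""
    usual_countries: set[str] = field(default_factory=set)
    usual_hours: set[int] = field(default_factory=set)
    daily_mb: list[float] = field(default_factory=list)

    def learn(self, country: str, hour: int, mb_downloaded: float) -> None:
        """Fold one day of observed activity into the baseline."""
        self.usual_countries.add(country)
        self.usual_hours.add(hour)
        self.daily_mb.append(mb_downloaded)


def anomaly_reasons(b: UserBaseline, country: str, hour: int, mb: float,
                    z_limit: float = 3.0) -> list[str]:
    """Return human-readable reasons this activity deviates from the user's baseline."""
    reasons = []
    if country not in b.usual_countries:
        reasons.append(f"new country: {country}")
    if hour not in b.usual_hours:
        reasons.append(f"unusual hour: {hour:02d}:00")
    mu, sigma = mean(b.daily_mb), pstdev(b.daily_mb)
    if sigma > 0:
        z = (mb - mu) / sigma
    else:
        # Perfectly constant history: any increase at all counts as extreme.
        z = float("inf") if mb > mu else 0.0
    if z > z_limit:
        reasons.append(f"download volume {z:.1f} standard deviations above normal")
    return reasons


# Twenty days of a London-based user logging in between 09:00 and 16:00 and downloading 50-70 MB.
baseline = UserBaseline()
for day in range(20):
    baseline.learn("GB", 9 + day % 8, 50.0 + (day % 5) * 5)

print(anomaly_reasons(baseline, "SG", 3, 2000.0))  # three reasons: country, hour, volume
print(anomaly_reasons(baseline, "GB", 10, 62.0))   # []
```

Note that the legitimacy of the credentials plays no part in the check; only the deviation from the user's own history does, which is the point of the London example.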

Threat Intelligence and Predictive Analysis

AI systems ingest and correlate threat intelligence from thousands of sources simultaneously — commercial threat feeds, government advisories, dark web monitoring, honeypot data, and telemetry from millions of protected endpoints around the world. Machine learning models identify patterns and connections across this vast dataset that no human analyst could detect, predicting which vulnerabilities are most likely to be exploited next, which threat actors are likely targeting organizations in your sector, and what attack techniques are trending. This predictive capability allows security teams to prioritize defenses proactively rather than reacting after the fact.
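One small piece of this correlation, combining sightings of the same indicator from several feeds into a single confidence score, can be sketched as follows. The source names, their reliability values, and the default for unknown sources are invented for illustration, and the noisy-OR combination used here assumes the sources report independently, which real feeds often do not. It does not attempt the predictive modeling described above; it only shows why corroboration across sources raises confidence.

```python
from collections import defaultdict
from math import prod

# Hypothetical reliability per source: the chance that a report from it is a true positive.
SOURCE_RELIABILITY = {"commercial_feed": 0.7, "gov_advisory": 0.9, "honeypot": 0.5}
DEFAULT_RELIABILITY = 0.3  # assumption for sources with no track record


def correlate(reports):
    """reports: iterable of (indicator, source) pairs.

    Returns {indicator: (confidence, sorted_sources)}, highest confidence first.
    """
    sources_by_indicator = defaultdict(set)
    for indicator, source in reports:
        sources_by_indicator[indicator].add(source)  # repeat reports from one source count once

    scored = {}
    for indicator, sources in sources_by_indicator.items():
        # Noisy-OR: the indicator is benign only if every (assumed independent) source is wrong.
        p_all_wrong = prod(1 - SOURCE_RELIABILITY.get(s, DEFAULT_RELIABILITY) for s in sources)
        scored[indicator] = (round(1 - p_all_wrong, 3), sorted(sources))
    return dict(sorted(scored.items(), key=lambda kv: kv[1][0], reverse=True))


# Documentation-range IP addresses (RFC 5737) used as example indicators.
reports = [
    ("203.0.113.7", "honeypot"),
    ("203.0.113.7", "gov_advisory"),
    ("203.0.113.7", "honeypot"),   # repeat sighting, same source
    ("198.51.100.9", "honeypot"),
]
for indicator, (confidence, sources) in correlate(reports).items():
    print(indicator, confidence, sources)
# 203.0.113.7 0.95 ['gov_advisory', 'honeypot']
# 198.51.100.9 0.5 ['honeypot']
```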

Automated Threat Detection and Response

Speed is everything in incident response. Every minute an attacker spends undetected inside a network is a minute they can use to escalate privileges, exfiltrate data, move laterally, and establish persistence. AI-powered security orchestration and automated response systems can detect a threat, correlate it with contextual intelligence, assess its severity, and execute a containment response — isolating a compromised endpoint, blocking a malicious IP, revoking a compromised credential — in seconds rather than the hours or days it would take a human-driven process. This speed differential is the difference between a contained incident and a catastrophic breach.
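A minimal sketch of the assess-and-contain step follows, assuming detection and correlation have already happened upstream and produced a `Detection` record. The playbook entries, the severity formula, and the 0.9 confidence cut-off for acting without a human are all hypothetical; the function only returns the chosen action rather than calling any real firewall, EDR, or identity API.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    kind: str                 # detection type, e.g. "ransomware_execution"
    entity: str               # host, IP address, or account the detection concerns
    confidence: float         # upstream model confidence, 0.0-1.0
    asset_criticality: float  # 0.0 (lab machine) to 1.0 (business-critical system)


# Hypothetical playbook mapping detection types to containment actions.
PLAYBOOK = {
    "ransomware_execution": "isolate_endpoint",
    "c2_beacon": "block_ip",
    "credential_misuse": "revoke_credential",
}


def respond(d: Detection, auto_threshold: float = 0.9) -> dict:
    """Choose a containment action and decide whether it may run without a human."""
    action = PLAYBOOK.get(d.kind)
    if action is None:
        return {"action": "escalate_to_analyst", "entity": d.entity, "status": "no playbook"}
    # Severity blends how sure we are with how much the affected asset matters.
    severity = round(d.confidence * (0.5 + 0.5 * d.asset_criticality), 2)
    status = "auto_executed" if d.confidence >= auto_threshold else "awaiting_approval"
    return {"action": action, "entity": d.entity, "severity": severity, "status": status}


print(respond(Detection("ransomware_execution", "laptop-042", 0.97, 0.3)))
print(respond(Detection("credential_misuse", "svc-billing", 0.60, 0.9)))
print(respond(Detection("odd_dns_pattern", "ws-17", 0.80, 0.5)))
```

Routing low-confidence cases to an approval queue instead of executing them is one simple way to encode the boundary between autonomous and human-approved actions.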

Natural Language Processing for Phishing Detection

Phishing remains one of the most common initial attack vectors in data breaches. AI-powered email security systems use natural language processing to analyze the content, tone, structure, and context of incoming messages, going far beyond the domain blacklists and attachment scanning of traditional email filters. These systems detect subtle linguistic patterns that indicate social engineering attempts, identify when an email is impersonating a known contact, and flag requests for sensitive information or urgent action that match phishing playbooks, even when the email comes from a domain that has never been flagged before.
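The linguistic side of this can be illustrated with one classical NLP technique, a multinomial naive Bayes text classifier. This is a swapped-in textbook method, not a description of how any particular email security product works: the six training messages are invented toy examples, and the sketch ignores the sender, header, and impersonation signals mentioned above.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens; a real system would also use headers, URLs, and sender history."""
    return re.findall(r"[a-z']+", text.lower())


class NaiveBayes:
    """Multinomial naive Bayes text classifier with add-one (Laplace) smoothing."""

    def fit(self, docs: list[str], labels: list[str]) -> "NaiveBayes":
        self.word_counts = {label: Counter() for label in set(labels)}
        self.doc_counts = Counter(labels)
        for doc, label in zip(docs, labels):
            self.word_counts[label].update(tokenize(doc))
        self.vocab = set().union(*self.word_counts.values())
        self.n_docs = len(docs)
        return self

    def predict(self, text: str) -> str:
        def log_posterior(label: str) -> float:
            counts = self.word_counts[label]
            total = sum(counts.values())
            # Work in log space so long messages don't underflow to zero.
            score = math.log(self.doc_counts[label] / self.n_docs)
            for tok in tokenize(text):
                score += math.log((counts[tok] + 1) / (total + len(self.vocab)))
            return score

        return max(self.word_counts, key=log_posterior)


# Invented toy training set; real filters train on very large labeled corpora.
train = [
    ("urgent verify your password now", "phishing"),
    ("your account is suspended click to verify", "phishing"),
    ("urgent wire transfer confirm payment details", "phishing"),
    ("meeting notes attached for tomorrow", "legit"),
    ("lunch on friday with the team", "legit"),
    ("quarterly report draft for review", "legit"),
]
model = NaiveBayes().fit([t for t, _ in train], [y for _, y in train])
print(model.predict("Urgent: please verify your password"))  # phishing
print(model.predict("Meeting notes for the review"))         # legit
```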

AI-Powered Vulnerability Management

Modern enterprise environments contain thousands of systems, each potentially running hundreds of software components, any of which might contain exploitable vulnerabilities. Traditional vulnerability management approaches (scan everything, produce a list, patch in order of severity score) generate overwhelming backlogs and miss the real-world context of which vulnerabilities are actively being exploited. AI-powered vulnerability management correlates vulnerability data with real-time threat intelligence to prioritize remediation based on the likelihood of exploitation in your specific environment, ensuring that limited patching resources are focused where they matter most.
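The difference between severity-ordered and risk-ordered patching can be sketched in a few lines. The fields are loosely modeled on public data sources such as FIRST's Exploit Prediction Scoring System (a probability of exploitation) and CISA's Known Exploited Vulnerabilities catalog (a flag for exploitation already observed), but the scoring formula, the vulnerability IDs, and all values are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Vuln:
    vuln_id: str
    cvss: float               # base severity score, 0-10
    exploit_prob: float       # predicted probability of exploitation (EPSS-style), 0-1
    known_exploited: bool     # already seen exploited in the wild (KEV-style flag)
    asset_criticality: float  # importance of the affected system in this environment, 0-1


def risk(v: Vuln) -> float:
    # Observed exploitation outranks any prediction, so treat it as certain.
    likelihood = 1.0 if v.known_exploited else v.exploit_prob
    return (v.cvss / 10) * likelihood * v.asset_criticality


def prioritize(vulns: list[Vuln]) -> list[Vuln]:
    """Highest estimated risk first."""
    return sorted(vulns, key=risk, reverse=True)


backlog = [
    Vuln("VULN-A", cvss=9.8, exploit_prob=0.02, known_exploited=False, asset_criticality=0.5),
    Vuln("VULN-B", cvss=7.5, exploit_prob=0.90, known_exploited=True, asset_criticality=1.0),
    Vuln("VULN-C", cvss=5.3, exploit_prob=0.10, known_exploited=False, asset_criticality=1.0),
]
print([v.vuln_id for v in sorted(backlog, key=lambda v: v.cvss, reverse=True)])
# ['VULN-A', 'VULN-B', 'VULN-C']  (severity only)
print([v.vuln_id for v in prioritize(backlog)])
# ['VULN-B', 'VULN-C', 'VULN-A']  (risk-based)
```

The highest CVSS score (VULN-A) drops to last place because exploitation is unlikely and the affected asset is less critical, while a medium-severity flaw that is already being exploited on a critical system moves to the top.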

AI-Powered Cybersecurity in Action: Real-World Deployments

AI cybersecurity is not a theoretical capability — it is actively defending organizations across every sector right now. Here are some of the most significant real-world examples:

Financial Services: Fraud Detection at Millisecond Speed

Payment networks and banks process hundreds of millions of transactions every day. AI fraud detection systems analyze each transaction in real time — typically in under 100 milliseconds — assessing dozens of behavioral and contextual signals to determine whether a transaction is legitimate or fraudulent. These systems catch fraud patterns that emerge and evolve in real time, adapting continuously to new attack techniques without requiring manual rule updates. Major card networks report that AI-powered fraud detection prevents billions of dollars in losses annually that rule-based systems would have missed.
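Production fraud models weigh dozens of signals at once; the sketch below shows just two hand-written ones to illustrate the kind of contextual check involved. It assumes each transaction carries coordinates and a timestamp, flags "impossible travel" when the implied speed between consecutive transactions exceeds 900 km/h (an assumed threshold, roughly airliner cruising speed), and flags amounts more than ten times the card's typical spend (also an assumption).

```python
from dataclasses import dataclass
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0


@dataclass
class Txn:
    amount: float
    lat: float
    lon: float
    timestamp_s: float  # seconds since the Unix epoch


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points on a spherical Earth."""
    dlat, dlon = radians(lat2 - lat1), radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(min(1.0, sqrt(a)))


def fraud_signals(prev: Txn, cur: Txn, typical_amount: float,
                  max_kmh: float = 900.0, amount_ratio: float = 10.0) -> list[str]:
    """Two hand-written features; a production model would weigh dozens of them."""
    signals = []
    hours = max((cur.timestamp_s - prev.timestamp_s) / 3600, 1e-6)  # avoid divide-by-zero
    speed = haversine_km(prev.lat, prev.lon, cur.lat, cur.lon) / hours
    if speed > max_kmh:
        signals.append(f"impossible travel: {speed:,.0f} km/h")
    if cur.amount > amount_ratio * typical_amount:
        signals.append("amount far above the card's typical spend")
    return signals


london = Txn(amount=45.0, lat=51.5074, lon=-0.1278, timestamp_s=0)
singapore = Txn(amount=2000.0, lat=1.3521, lon=103.8198, timestamp_s=3600)   # one hour later
london_again = Txn(amount=40.0, lat=51.5074, lon=-0.1278, timestamp_s=1800)  # 30 minutes later

print(fraud_signals(london, singapore, typical_amount=50.0))     # both signals fire
print(fraud_signals(london, london_again, typical_amount=50.0))  # []
```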

Endpoint Detection and Response

AI-powered endpoint detection and response platforms monitor every process, file system change, network connection, and registry modification on every protected device — continuously, in real time. When behavior consistent with malware execution, credential theft, or lateral movement is detected, the system can automatically isolate the affected endpoint, preserve forensic evidence, and alert analysts with a complete timeline of the attack chain. This capability has transformed incident response from a days-long manual investigation into an automated process measured in minutes.
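Reconstructing the attack chain timeline is essentially a walk up the process tree. The sketch below assumes a hypothetical, simplified event schema (not any vendor's telemetry format), assumes the malicious process has already been flagged by a detection model, and ignores real-world complications such as process ID reuse.

```python
from dataclasses import dataclass


@dataclass
class ProcEvent:
    ts: str      # ISO-8601 timestamp (sorts correctly as a string)
    pid: int     # process ID
    ppid: int    # parent process ID
    image: str   # executable name
    action: str  # what the process did


def attack_chain(events: list[ProcEvent], flagged_pid: int) -> list[ProcEvent]:
    """Collect the flagged process and all its ancestors, then return their events in time order."""
    parent_of = {e.pid: e.ppid for e in events}
    lineage = set()
    pid = flagged_pid
    while pid in parent_of and pid not in lineage:  # stop at the root or on a cycle
        lineage.add(pid)
        pid = parent_of[pid]
    return sorted((e for e in events if e.pid in lineage), key=lambda e: e.ts)


# Hypothetical endpoint telemetry for one device.
events = [
    ProcEvent("2026-01-10T09:00:01", 100, 1, "explorer.exe", "user session started"),
    ProcEvent("2026-01-10T09:02:10", 200, 100, "winword.exe", "opened invoice.docm"),
    ProcEvent("2026-01-10T09:02:15", 300, 200, "powershell.exe", "launched with encoded command"),
    ProcEvent("2026-01-10T09:02:20", 300, 200, "powershell.exe", "connected to 203.0.113.7:443"),
    ProcEvent("2026-01-10T09:05:00", 400, 100, "chrome.exe", "process start"),
]
for e in attack_chain(events, flagged_pid=300):
    print(e.ts, e.image, e.action)
```

The unrelated browser process shares an ancestor but is not on the flagged process's lineage, so it stays out of the timeline.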

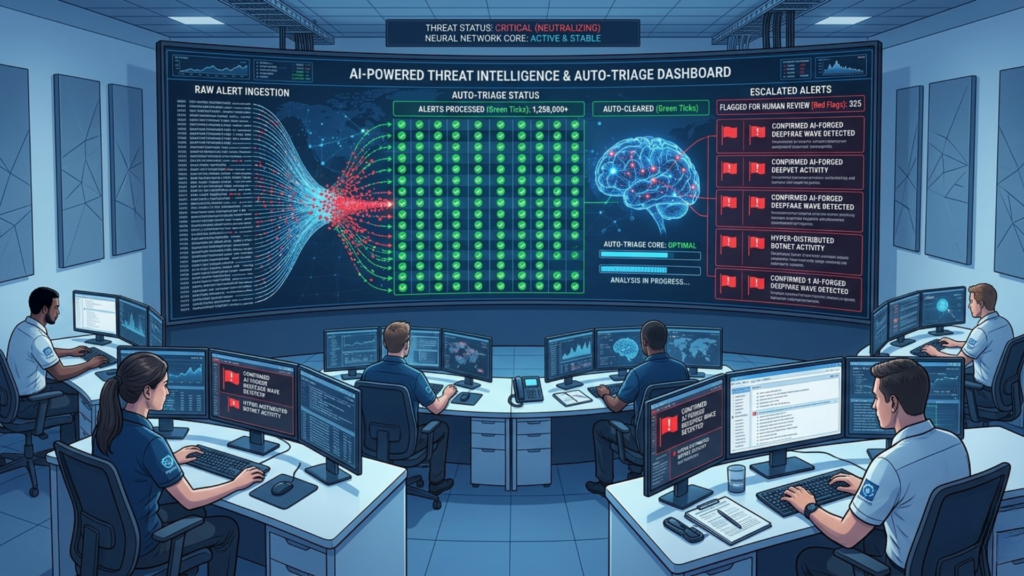

Security Operations Center Augmentation

Forward-thinking organizations are deploying AI as a force multiplier for their human Security Operations Center analysts. AI systems handle first-level triage of the overwhelming volume of security alerts: automatically investigating and correlating them, dismissing false positives, and escalating only the alerts that genuinely warrant human attention, enriched with full context and recommended response actions. Analysts who previously spent most of their time chasing false positives can instead devote most of it to genuine threats, improving both security outcomes and analyst job satisfaction.
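A rule-based simplification of first-level triage is sketched below: dismiss alerts matching previously confirmed false positives, group the rest by entity, and escalate entities whose distinct signals add up past a threshold. The allowlist, the 1.0 escalation threshold, and the assumption that each alert arrives with an upstream risk score are all hypothetical; AI triage systems learn these judgments rather than hard-coding them.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Alert:
    entity: str   # host or account the alert is about
    rule: str     # which detection fired
    score: float  # 0.0-1.0 risk score assigned upstream


# Hypothetical (entity, rule) pairs that analysts have previously confirmed as benign.
KNOWN_BENIGN = {("backup-srv-01", "large_outbound_transfer")}


def triage(alerts: list[Alert], escalate_at: float = 1.0) -> list[dict]:
    """Dismiss known false positives, correlate the rest by entity, escalate the strongest."""
    by_entity = defaultdict(list)
    for a in alerts:
        if (a.entity, a.rule) in KNOWN_BENIGN:
            continue  # auto-dismissed; never reaches an analyst
        by_entity[a.entity].append(a)

    escalations = []
    for entity, group in by_entity.items():
        # Distinct signals on one entity add up; repeats of the same rule count once (max score).
        best_per_rule = {}
        for a in group:
            best_per_rule[a.rule] = max(a.score, best_per_rule.get(a.rule, 0.0))
        combined = round(sum(best_per_rule.values()), 2)
        if combined >= escalate_at:
            escalations.append({"entity": entity, "combined_score": combined,
                                "rules": sorted(best_per_rule)})
    return sorted(escalations, key=lambda e: e["combined_score"], reverse=True)


alerts = [
    Alert("backup-srv-01", "large_outbound_transfer", 0.9),  # known benign: dismissed
    Alert("ws-17", "new_admin_tool", 0.6),
    Alert("ws-17", "unusual_login", 0.7),
    Alert("ws-17", "unusual_login", 0.5),                    # repeat of the same rule
    Alert("ws-22", "port_scan", 0.4),                        # too weak on its own
]
print(triage(alerts))
```

Five raw alerts collapse into a single escalation for `ws-17`, carrying the rules that contributed to it.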

Government and Critical Infrastructure Protection

National cybersecurity agencies in the United States, United Kingdom, European Union, and elsewhere are deploying AI systems to monitor the security of critical national infrastructure — power grids, water systems, financial markets, and government networks. These systems provide continuous visibility across vast, complex environments that would be impossible to monitor effectively with human-only teams, detecting the early indicators of nation-state attack campaigns that often precede major incidents by weeks or months.

The Dark Side: How Attackers Are Using AI Against You

Any honest discussion of AI in cybersecurity must acknowledge that the same capabilities being used for defense are also being weaponized for attack. Understanding how adversaries are using AI is essential for building effective defenses:

- AI-generated phishing: Large language models can generate thousands of highly personalized, grammatically polished phishing emails at essentially zero marginal cost, each tailored to a specific target based on their publicly available information. The days when phishing could be spotted by poor writing and grammatical errors are largely over: AI-generated spear phishing can be nearly indistinguishable from legitimate communication.

- Automated vulnerability discovery: AI tools can scan software and network configurations for exploitable vulnerabilities far faster than human penetration testers, and attackers have access to these tools too. The time between the public disclosure of a vulnerability and its first exploitation in the wild has compressed from months to days or even hours.

- Polymorphic and evasive malware: AI is being used to generate malware that continuously mutates its code signature to evade detection by signature-based security tools. Each instance is slightly different, making traditional pattern-matching detection ineffective.

- Deepfake social engineering: AI-generated voice and video deepfakes are being used in sophisticated fraud campaigns — impersonating executives to authorize fraudulent wire transfers, impersonating IT staff to obtain credentials, and creating fake video evidence to manipulate targets. Several high-profile cases of deepfake-enabled fraud have resulted in losses of millions of dollars.

- AI-powered reconnaissance: Before launching an attack, sophisticated adversaries use AI tools to automatically map an organization’s attack surface — identifying public-facing systems, employee information, technology stack details, and relationships that can be exploited for social engineering or technical attacks.
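The polymorphic-malware point is easy to demonstrate with exact-match hash signatures, the simplest form of signature-based detection. The payload below is a harmless placeholder string; the sketch only shows that changing a single byte produces a completely different SHA-256 digest, so a blocklist of known-bad hashes no longer matches.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


# Harmless placeholder bytes standing in for a known malware sample.
original = b"PLACEHOLDER-PAYLOAD-v1"
KNOWN_BAD_HASHES = {sha256_hex(original)}

# A "mutated" copy differing by one byte. Real polymorphic code changes more,
# but keeps its behavior; the signature still breaks exactly the same way.
variant = original + b"\x00"

print(sha256_hex(original) in KNOWN_BAD_HASHES)  # True
print(sha256_hex(variant) in KNOWN_BAD_HASHES)   # False
```

Behavior-based detection sidesteps this because a mutated copy typically still behaves the same way once it runs.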

The most important insight in AI cybersecurity is this: you cannot fight AI-powered attacks with human-speed defenses. The only viable response to AI on the attack is AI on the defense.

Challenges and Limitations of AI-Powered Cybersecurity

AI-powered cybersecurity is powerful — but it is not perfect. Organizations need a clear-eyed understanding of its limitations:

- False positives and alert fatigue: Even sophisticated AI systems generate false positive alerts. If tuning is poor or the system is overly sensitive, security teams can still face overwhelming alert volumes — now generated by AI rather than traditional rules. Careful tuning, continuous improvement, and human oversight remain essential.

- Adversarial AI attacks: Just as AI can be used to detect attacks, attackers can use AI to probe and understand the detection models deployed against them — gradually learning what behaviors trigger alerts and adapting to avoid them. This adversarial dynamic means AI security models must be continuously updated and retrained.

- Data quality dependencies: AI security systems are only as good as the data they learn from. Incomplete, inconsistent, or biased training data produces models with blind spots that attackers can exploit. Investing in data quality is not optional — it is foundational.

- Explainability challenges: When an AI system flags a threat or blocks an action, security teams and business stakeholders sometimes need to understand why. Some AI models — particularly deep learning systems — can be difficult to explain in human terms, creating friction in environments where decisions need to be auditable and justifiable.

- Over-reliance risk: AI cybersecurity tools are powerful force multipliers — but they are not replacements for skilled human judgment. Organizations that treat AI as a fully autonomous security solution and reduce human oversight proportionally are creating new vulnerabilities. The most resilient security postures combine AI speed and scale with human expertise and contextual judgment.
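The tuning mentioned in the first bullet is, at its core, a trade-off between false positives and false negatives. A minimal sketch, assuming analysts have labeled past alerts as malicious or benign: choose the highest alerting threshold that still catches a target share of real threats (recall). The feedback data and recall targets are invented for illustration.

```python
from typing import Optional


def confusion_at(threshold: float, feedback: list[tuple[float, bool]]) -> tuple[int, int, int]:
    """(true positives, false positives, false negatives) if we alert when score >= threshold."""
    tp = sum(1 for score, malicious in feedback if score >= threshold and malicious)
    fp = sum(1 for score, malicious in feedback if score >= threshold and not malicious)
    fn = sum(1 for score, malicious in feedback if score < threshold and malicious)
    return tp, fp, fn


def pick_threshold(feedback: list[tuple[float, bool]], min_recall: float) -> Optional[float]:
    """Highest threshold (fewest alerts) that still catches at least min_recall of real threats."""
    best = None
    for t in sorted({score for score, _ in feedback}):
        tp, _, fn = confusion_at(t, feedback)
        if tp + fn > 0 and tp / (tp + fn) >= min_recall:
            best = t  # recall can only fall as t rises, so the last qualifying t is the highest
    return best


# Invented analyst feedback: (model score, confirmed malicious?)
feedback = [(0.95, True), (0.90, True), (0.85, False), (0.80, True), (0.60, False),
            (0.55, True), (0.40, False), (0.30, False), (0.20, False)]
for target in (0.75, 1.0):
    t = pick_threshold(feedback, target)
    print(target, t, confusion_at(t, feedback))
# 0.75 0.8 (3, 1, 1)
# 1.0 0.55 (4, 2, 0)
```

Demanding that every real threat be caught lowers the threshold from 0.8 to 0.55 and admits one more false positive (the 0.6 alert); making that trade-off explicit is what continuous tuning is about.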

Building an AI-Powered Cybersecurity Strategy for Your Organization

Whether you are a CISO planning a major security transformation or a business leader trying to understand where to invest, here is a practical framework for building AI cybersecurity capabilities:

- Assess Your Current Detection and Response Gap

Start by honestly evaluating your current mean time to detect and mean time to respond for security incidents. These metrics tell you exactly how much your current security posture depends on human speed and capacity — and therefore how much AI augmentation could improve your outcomes. Organizations with detection times measured in weeks or months have the most to gain from AI investment.
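Both metrics are simple averages once incidents carry the right timestamps. Definitions vary between organizations; this sketch assumes detection time is measured from initial compromise (usually only known after investigation) to detection, and response time from detection to containment. The incident records are hypothetical.

```python
from datetime import datetime
from statistics import mean


def hours_between(start: str, end: str) -> float:
    return (datetime.fromisoformat(end) - datetime.fromisoformat(start)).total_seconds() / 3600


def mttd_mttr(incidents: list[dict]) -> tuple[float, float]:
    """Mean time to detect and mean time to respond, in hours."""
    mttd = mean(hours_between(i["compromised"], i["detected"]) for i in incidents)
    mttr = mean(hours_between(i["detected"], i["contained"]) for i in incidents)
    return mttd, mttr


incidents = [
    {"compromised": "2026-03-01T08:00", "detected": "2026-03-03T08:00", "contained": "2026-03-03T14:00"},
    {"compromised": "2026-03-10T00:00", "detected": "2026-03-11T00:00", "contained": "2026-03-11T02:00"},
]
print(mttd_mttr(incidents))  # (36.0, 4.0)
```

Tracking these two numbers before and after an AI deployment gives a concrete measure of whether it actually shortened detection and response.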

- Prioritize Use Cases by Risk and Feasibility

Not every AI cybersecurity capability delivers equal value for every organization. Prioritize based on your specific threat profile. Organizations with large remote workforces should prioritize behavioral analytics for identity and endpoint monitoring. Organizations processing high volumes of financial transactions should prioritize AI fraud detection. Organizations with complex cloud environments should prioritize AI-powered cloud security posture management.

- Invest in Quality Data Infrastructure

AI security systems require clean, comprehensive, well-integrated data to function effectively. Before deploying AI security tools, ensure that your logging infrastructure is complete, your data is being collected consistently across all environments, and your data pipelines are reliable. AI built on poor data will underperform regardless of how sophisticated the algorithms are.
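A basic completeness check compares the asset inventory with the last time each asset's logs arrived. The sketch assumes you can export an inventory of asset names and a per-asset last-seen timestamp from your log platform; the asset names and the one-hour silence threshold are hypothetical.

```python
from datetime import datetime, timedelta


def silent_sources(inventory: set[str], last_seen: dict[str, datetime], now: datetime,
                   max_gap: timedelta = timedelta(hours=1)) -> list[str]:
    """Assets that have never sent logs, or have been quiet for longer than max_gap."""
    silent = []
    for asset in sorted(inventory):
        seen = last_seen.get(asset)
        if seen is None or now - seen > max_gap:
            silent.append(asset)
    return silent


now = datetime(2026, 1, 10, 12, 0)
inventory = {"dc-01", "web-01", "vpn-gw"}
last_seen = {
    "dc-01": datetime(2026, 1, 10, 11, 55),  # 5 minutes ago
    "web-01": datetime(2026, 1, 10, 6, 0),   # quiet for 6 hours
}
print(silent_sources(inventory, last_seen, now))  # ['vpn-gw', 'web-01']
```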

- Maintain Strong Human Oversight

Define clearly which security decisions AI systems can execute autonomously and which require human approval. Automated containment of clearly malicious activity, such as isolating a device that is actively executing ransomware, is appropriate for AI autonomy. Decisions with significant business impact or ambiguous threat signals should always involve human review. Build these governance boundaries into your AI security architecture from the start.

- Measure, Tune, and Improve Continuously

AI security systems improve with use, but only if they are actively managed. Invest in the operational processes to regularly review AI performance, tune models based on false positive and false negative feedback, retrain on new threat data, and measure improvement in key security metrics over time. AI cybersecurity is not a one-time deployment; it is an ongoing capability that requires continuous investment to remain effective.

Final Thoughts: AI-Powered Cybersecurity Is the Only Path Forward

The cybersecurity landscape of 2026 is one where AI-powered attacks are already operating at a scale, speed, and sophistication that makes human-only defense fundamentally insufficient. The choice organizations face is not whether to adopt AI-powered cybersecurity — it is how quickly they can build the capabilities, culture, and governance needed to deploy it effectively.

The organizations leading in AI-powered cybersecurity today are not just better protected against current threats; they are building the adaptive, intelligence-driven security foundations that will serve them as threats continue to evolve. Money invested in AI security capabilities today can spare an organization many times that amount in reactive breach response tomorrow.

The war between AI attackers and AI defenders is already underway. The only losing strategy is to show up without AI on your side.